When Right Is Still Wrong

In 1999, two NASA systems were each “right” and the agency still lost a spacecraft. In 2026, another NASA mission was so precisely on course that a planned correction burn was not needed. NASA learned the value of shared definitions the hard way. The uncomfortable part is that the same issue is arguably more relevant today, and many organisations still have not fully accounted for it.

Artemis: Precision that prevents work

The Artemis II journey around the moon was managed in stages. A critical one was the Trans-Lunar Injection: a 349-second burn, using 450 kg of propellant, that aims the craft not at the moon but at where the moon will be four days later. Three correction burns were planned. Only the second one was needed.

Mars: When “right” goes catastrophically wrong

In 1999, the Mars Climate Orbiter was lost when it approached Mars on a much lower trajectory than planned. The thruster impulse figures passed between two pieces of software were internally consistent. The problem? One produced them in US customary units (pound-force seconds) while the other expected SI units (newton-seconds), a factor of roughly 4.45.

The financial cost was estimated at $125m. The scientific loss was incalculable. And yes, it could have been avoided.

The real problem was shared meaning, not maths

Each system did what it was built to do. They just didn’t speak the same language. This wasn’t an arithmetic failure. It was a boundary failure, where meaning changed without anyone noticing.
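A boundary failure of this kind is easy to reproduce. The sketch below is purely illustrative and is not how any NASA software was written: the `Impulse` type, the unit labels and the sample values are all assumptions. It shows the difference between summing bare numbers and summing values that carry their unit across the boundary.

```python
from dataclasses import dataclass

# 1 pound-force is defined as exactly 4.4482216152605 newtons.
LBF_S_TO_N_S = 4.4482216152605


@dataclass(frozen=True)
class Impulse:
    """A thruster impulse that carries its unit alongside its value (hypothetical type)."""
    value: float
    unit: str  # "N*s" or "lbf*s"

    def to_newton_seconds(self) -> float:
        if self.unit == "N*s":
            return self.value
        if self.unit == "lbf*s":
            return self.value * LBF_S_TO_N_S
        # Refuse to guess when the unit is not one we know how to convert.
        raise ValueError(f"unknown impulse unit: {self.unit!r}")


def accumulate_impulse(readings: list[Impulse]) -> float:
    """Sum impulses in newton-seconds, converting each one at the boundary."""
    return sum(r.to_newton_seconds() for r in readings)


# Made-up sample values: same magnitude, different units.
readings = [Impulse(10.0, "lbf*s"), Impulse(10.0, "N*s")]

total = accumulate_impulse(readings)   # about 54.48 N*s
naive = sum(r.value for r in readings)  # 20.0, a number with no defined unit
```

Both sums run without error. Only one of them means anything.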

This pattern repeats in business every day

A purchase order, an invoice, and a warehouse record can all say “10 pallets” and still disagree operationally. ISO 6780 alone defines six standard pallet footprints. UK pallets, Euro pallets, and US GMA pallets aren’t interchangeable.

The same applies to pack, case, carton, unit, each. They sound precise until money, inventory, or delivery depends on them. Tobacco companies sidestep this elegantly: they reconcile on “sticks” (individual cigarettes) rather than variable packs and cartons. Base units matter more than convenient labels.
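Reconciling on a base unit can be sketched in a few lines. The pack sizes here (20 sticks per pack, 10 packs per carton) and the document quantities are assumptions chosen for illustration, not figures from any real company.

```python
# Hypothetical pack sizes, for illustration only.
STICKS_PER = {"stick": 1, "pack": 20, "carton": 200}


def to_sticks(quantity: int, unit: str) -> int:
    """Convert a quantity in any known label to the base unit (sticks)."""
    if unit not in STICKS_PER:
        raise ValueError(f"no base-unit conversion defined for {unit!r}")
    return quantity * STICKS_PER[unit]


# Three documents that use three different labels for the same shipment.
ordered = to_sticks(5, "carton")     # purchase order
invoiced = to_sticks(50, "pack")     # supplier invoice
received = to_sticks(990, "stick")   # warehouse count

# In base units the comparison is plain arithmetic.
shortfall = ordered - received
```

Once everything is in sticks, the order and the invoice agree and the warehouse count is short by 10. None of that is visible while the three documents are still talking in cartons, packs and sticks.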

Why this matters more now than ever

The old data quality argument was about better dashboards. The new one is about protecting decisions made by software acting on your behalf.

AI agents promise automated invoice matching, approvals, and exception handling. But if one supplier invoices by case, another by pallet, and another by each, the system still needs explicit mappings and conversion rules. Without them, it isn’t reasoning. It’s guessing.
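One way to picture “explicit mappings” is a governed conversion table keyed by supplier and unit, with the matcher refusing to proceed when an entry is missing. The supplier codes, conversion factors and status strings below are all hypothetical, and a real system would load the table from a controlled source rather than hard-code it.

```python
# Hypothetical governed table: (supplier, invoiced unit) -> number of eaches.
CONVERSIONS = {
    ("SUP-A", "case"): 12,
    ("SUP-B", "pallet"): 480,
    ("SUP-C", "each"): 1,
}


class MissingConversion(Exception):
    """Raised when no governed conversion exists for a supplier/unit pair."""


def normalise(supplier: str, quantity: int, unit: str) -> int:
    """Convert an invoiced quantity to eaches using only the governed table."""
    factor = CONVERSIONS.get((supplier, unit))
    if factor is None:
        raise MissingConversion(f"{supplier} has no conversion for {unit!r}")
    return quantity * factor


def match_line(supplier: str, qty: int, unit: str, received_eaches: int) -> str:
    """Compare an invoice line with a receipt, escalating instead of guessing."""
    try:
        invoiced_eaches = normalise(supplier, qty, unit)
    except MissingConversion:
        return "needs_review"
    return "match" if invoiced_eaches == received_eaches else "mismatch"
```

Under these assumptions, `match_line("SUP-A", 10, "case", 120)` returns `"match"`, while `match_line("SUP-A", 1, "pallet", 480)` returns `"needs_review"` because nobody has defined what a pallet means for that supplier.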

Data access ≠ business understanding

Vendors show beautiful demos where models connect seamlessly to enterprise data. But most organisations don’t run like demos.

Over time, systems accumulate custom tolerances, supplier quirks, and local workarounds. These live in configurations, spreadsheets, and tribal knowledge, not in clean data models. A model connected via the Model Context Protocol (MCP) can read your invoices, but it won’t automatically understand why your warehouse tolerates certain short shipments or why “acceptable mismatch” varies by supplier.
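Supplier-specific tolerance only becomes visible to software once it is written down as data. A minimal sketch, assuming made-up supplier codes and percentages, and covering short shipments only (over-receipts are out of scope here):

```python
# Hypothetical short-shipment tolerances, as whole percentages of the order.
SHORT_SHIP_TOLERANCE_PCT = {"SUP-A": 2, "SUP-B": 0}
DEFAULT_TOLERANCE_PCT = 0  # unknown suppliers get no tolerance


def check_receipt(supplier: str, ordered: int, received: int) -> tuple[bool, str]:
    """Decide whether a short shipment is acceptable and say why."""
    pct = SHORT_SHIP_TOLERANCE_PCT.get(supplier, DEFAULT_TOLERANCE_PCT)
    shortfall = ordered - received
    # Integer comparison avoids floating-point rounding at the threshold.
    accepted = shortfall * 100 <= ordered * pct
    verdict = "accepted" if accepted else "rejected"
    reason = (
        f"{supplier}: received {received} of {ordered} "
        f"(short by {shortfall}); tolerance is {pct}%; {verdict}"
    )
    return accepted, reason
```

With these numbers, a receipt of 985 against an order of 1000 is accepted for `SUP-A` and rejected for `SUP-B`. The returned reason string is what lets a person check the decision in business terms instead of taking it on trust.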

Even experts need operational grounding

NASA didn’t lack brilliant engineers when it lost the Mars Climate Orbiter. It lacked shared definitions across boundaries. Vendors don’t lack technical expertise. They may lack your operational context.

A world-class team fails when assumptions aren’t aligned. An impressive AI solution underperforms when it doesn’t understand what your organisation really means by case, pallet, tolerance, or short shipment.

Two operational futures

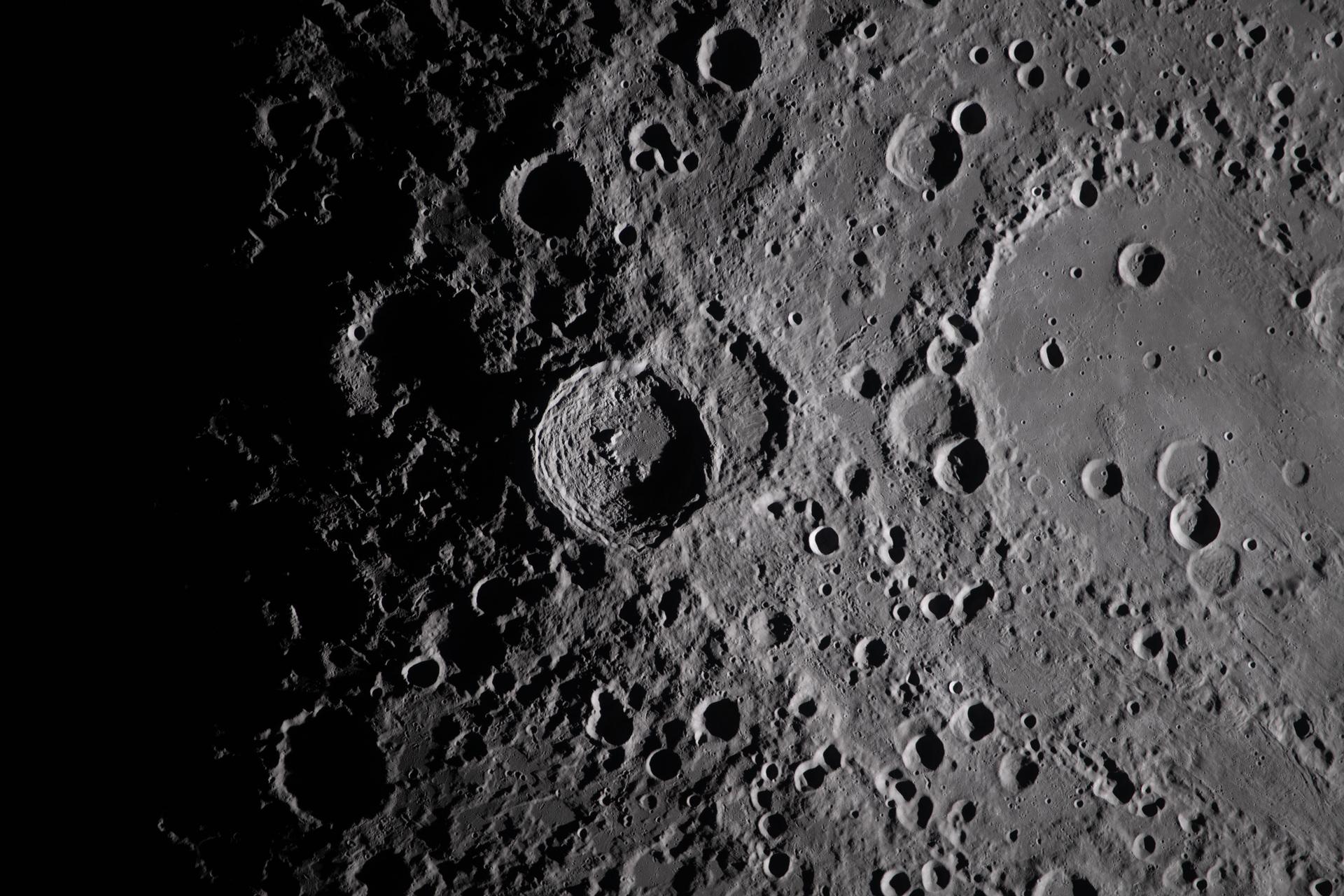

Artemis and Mars aren’t just space stories. They describe what happens when:

- Precision is engineered upfront → systems need fewer corrections

- Meaning breaks at boundaries → systems proceed confidently toward wrong outcomes

Modern enterprise AI sits between these futures.

Questions that matter more than the demo

Before trusting AI to match, approve, or order:

- Do all parties mean the same thing by quantity, unit, pack, case, pallet?

- Are supplier tolerances and conversions governed centrally, not in spreadsheets?

- Can the system explain its matching decisions in business terms?

- Does it understand your real procedures, or just the demo version?

The bottom line

Data quality isn’t new. What’s new is trusting intelligent systems to act on data whose meaning was never properly defined. Precision still needs to be engineered. Shared meaning doesn’t emerge by magic.

Otherwise you get the oldest failure in computing: sophisticated systems doing the wrong thing, perfectly.